- Memory dim3

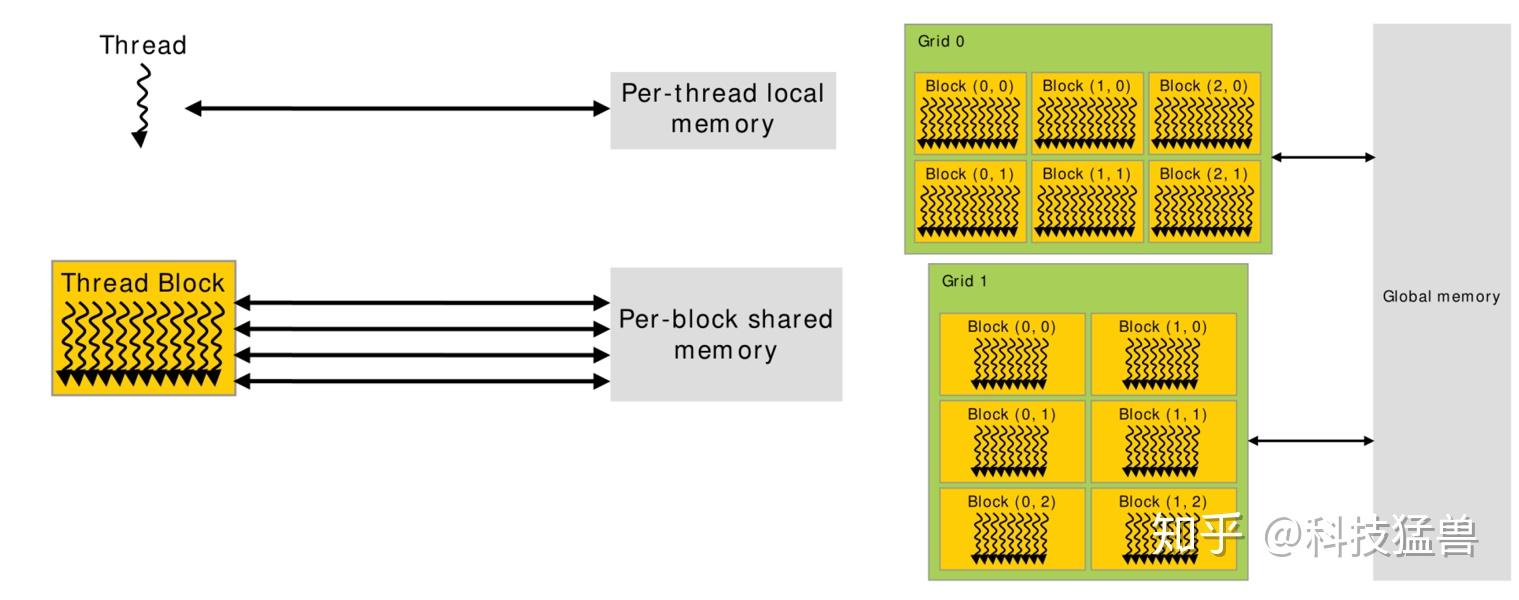

The GPU is called a device, and GPU memory is likewise called device memory. The host is the CPU available in the system, and the system memory associated with the CPU is called host memory.

The `shape` argument is similar to its counterpart in the NumPy API, with the requirement that it must contain a constant expression. The return value is a NumPy-array-like object.

The matrix-multiplication example uses the following declarations:

- `#define pos2d(Y, X, W) ((Y) * (W) + (X))`
- `const unsigned int BPG = 50;`
- `const unsigned int TPB = 32;`
- `const unsigned int N = BPG * TPB;`
- `__global__ void cuMatrixMul(const float A, const float B, float C)`

Transfers and kernels can overlap:

- A Direct Memory Access (DMA) copy engine runs CPU-GPU memory transfers in the background. This requires page-locked memory.
- Some Tesla GPUs have two or more DMA engines, which allows simultaneous send, receive, and inter-GPU communication.
- Concurrent kernel execution starts the next kernel before the previous kernel finishes, which mitigates the impact of load imbalance (the tail effect).
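The `pos2d` macro and the `BPG`, `TPB`, and `N` constants quoted above are plain index arithmetic, so they can be checked on the CPU. The following is a minimal sketch in pure Python rather than CUDA code. The helper name `global_index` is hypothetical, and it assumes the usual one-dimensional CUDA convention of `blockIdx * blockDim + threadIdx`.

```python
# Constants taken from the text; the helper functions are illustrative only.
BPG = 50          # blocks per grid
TPB = 32          # threads per block
N = BPG * TPB     # total threads along one dimension (and matrix width)


def pos2d(y: int, x: int, w: int) -> int:
    """Row-major flat offset of element (y, x) in a matrix of width w."""
    return y * w + x


def global_index(block_idx: int, thread_idx: int, block_dim: int = TPB) -> int:
    """Hypothetical helper: the 1D global index of a thread in the grid."""
    return block_idx * block_dim + thread_idx


if __name__ == "__main__":
    print(N)                               # 1600
    print(pos2d(2, 3, N))                  # 3203
    print(global_index(BPG - 1, TPB - 1))  # 1599
```

With these values, the last thread of the last block maps to index `N - 1`, so a grid of `BPG` blocks of `TPB` threads covers one row or column of an `N`-wide matrix exactly.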

CUDA C makes available a region of memory called shared memory. This region brings with it another extension to the C language, akin to `__device__` and `__global__`: as a programmer, you can add the CUDA C keyword `__shared__` to a variable declaration to make that variable resident in shared memory.

Write-combined host memory, by contrast, is faster to write by the host and to read by the device, but slower to read by the host and slower to write by the device.
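A common use of shared memory is tiled matrix multiplication, where each block stages small tiles of the inputs before computing with them. The sketch below imitates that access pattern on the CPU in pure Python. It is not GPU code: the names `matmul_tiled` and `TILE` are hypothetical, the scratch lists `sa` and `sb` only stand in for per-block shared buffers, and the matrix width is assumed to be a multiple of the tile width.

```python
TILE = 2  # hypothetical tile width; a GPU kernel would typically use the block width


def matmul_tiled(a, b, n, tile=TILE):
    """Multiply two n x n matrices stored as row-major flat lists, tile by tile.

    Assumes n is a multiple of tile.
    """
    c = [0.0] * (n * n)
    for by in range(0, n, tile):              # one "block" per output tile
        for bx in range(0, n, tile):
            for k0 in range(0, n, tile):      # walk along the shared dimension
                # Stage one tile of each input in a small scratch buffer,
                # standing in for per-block shared memory.
                sa = [[a[(by + i) * n + (k0 + k)] for k in range(tile)]
                      for i in range(tile)]
                sb = [[b[(k0 + k) * n + (bx + j)] for j in range(tile)]
                      for k in range(tile)]
                # Each (i, j) pair plays the role of one thread in the block.
                for i in range(tile):
                    for j in range(tile):
                        acc = 0.0
                        for k in range(tile):
                            acc += sa[i][k] * sb[k][j]
                        c[(by + i) * n + (bx + j)] += acc
    return c


if __name__ == "__main__":
    a = [1.0, 2.0, 3.0, 4.0]
    b = [5.0, 6.0, 7.0, 8.0]
    print(matmul_tiled(a, b, 2))  # [19.0, 22.0, 43.0, 50.0]
```

The point of the staging step is that each input element is fetched from the large, slow array once per tile and then reused `tile` times from the small buffer, which is the saving that shared memory provides on a real device.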


The following are special DeviceNDArray factories:

- `numba.cuda.device_array(shape, dtype=np.float, strides=None, order='C', stream=0)`: allocate an empty device ndarray.
- `numba.cuda.pinned_array(shape, dtype=np.float, strides=None, order='C')`: allocate a `numpy.ndarray` with a buffer that is pinned (page-locked).
- `numba.cuda.mapped_array(shape, dtype=np.float, strides=None, order='C', stream=0, portable=False, wc=False)`: allocate a mapped ndarray with a buffer that is pinned and mapped on to the device. `portable` is a boolean flag to allow the allocated device memory to be usable in multiple devices.

`DeviceNDArray.copy_to_host(ary=None, stream=0)` copies `self` to `ary`, or creates a new NumPy ndarray if `ary` is `None`. A stream can be passed explicitly, as in `copy_to_host(stream=stream)`.